In which I try to explain what AI is to my 90-year-old dad,

and why he should care….

I’ve been using ChatGPT on an almost daily basis for over two and a half years now, in my writing (proofreading and copy-editing), research, and learning. I’ve also used it to run a Monte Carlo simulation on a game design, and to transcribe and translate 80-year-old letters from my grandfather.

I would characterize it as a very smart but indiscriminate five-year-old running around with a sharp knife. It can be useful, but it definitely needs adult supervision, and it’s growing up fast.[1]

At its simplest, AI is a language prediction machine that’s eager to please. It’s much like predictive text or spell checking on your phone, but supercharged. It gets ‘trained’ by crunching through all the world’s writing (and code and pictures and movies) to be able to give us the responses it thinks we want.

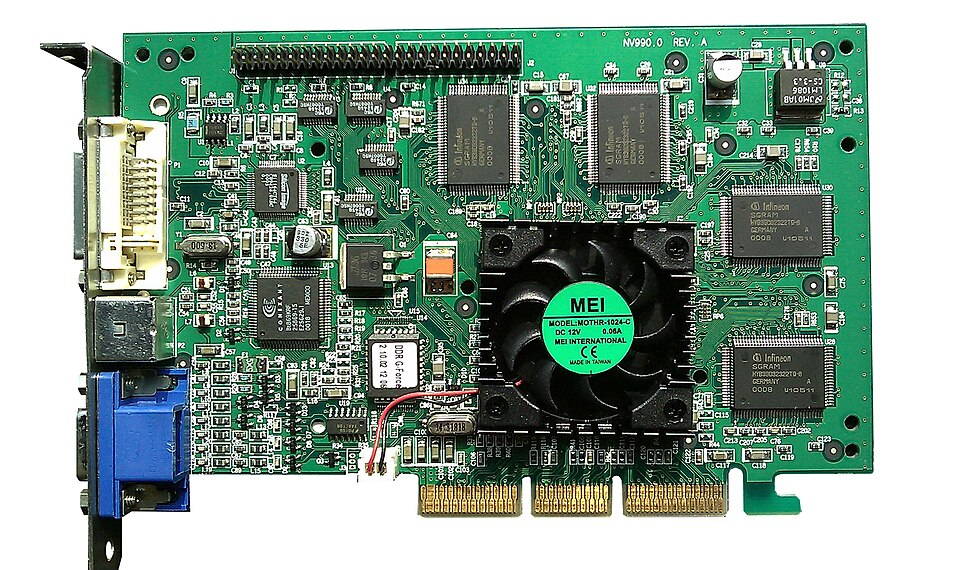

The math is interesting, and now I wish I’d paid closer attention in my linear algebra course at university. I might have gotten rich being an animation studio geek, since the Graphics Processing Units (GPUs) that AI relies on were originally created to offload the chore of rendering images from the main processor of your desktop computer, for video games and the like. These boards are optimized to quickly figure out how far things are from each other (objects in space, words in a vocabulary), where the light is coming from, and how the shadows are being cast (ray-tracing). These functions are easily adapted to AI, and advances in GPU chip design and manufacturing are now part of the global arms race.
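For a taste of the linear algebra involved, here is a minimal Python sketch of measuring how ‘close’ two words are once each word has been turned into a list of numbers. The three-number coordinates below are made up purely for illustration (real systems learn hundreds or thousands of numbers per word), and the measure used, cosine similarity, is one common choice rather than the only one.

```python
import math

# Made-up 3-number "coordinates" for a few words, purely for illustration.
# Real models learn these numbers from training data.
vectors = {
    "fox": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Return a score near 1.0 when two vectors point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

fox_dog = cosine_similarity(vectors["fox"], vectors["dog"])
fox_car = cosine_similarity(vectors["fox"], vectors["car"])

# With these made-up numbers, "fox" scores closer to "dog" than to "car".
print(f"fox vs dog: {fox_dog:.2f}")
print(f"fox vs car: {fox_car:.2f}")
```

A GPU earns its keep by doing millions of these multiply-and-add steps at the same time instead of one after another.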

AI ‘maps’ how far apart words, phrases, concepts, etc. are from each other (metaphorically) by churning through all the world’s writing (training data) so it can make its predictions about what’s supposed to come next. For example, in everyday usage, the phrase “A quick, brown” is almost always followed by the word “fox”, so the phrase and the word are ‘close’ to each other. “My mother” and “the car” are less likely to appear together, but it’s still possible.[2][3]
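The “what comes next” idea can be sketched in a few lines of Python. This is a deliberately crude stand-in: it simply counts which word follows a phrase in a tiny, made-up sample of text, whereas real AI systems use neural networks trained on vastly more data. The sample sentences and the function names are assumptions for illustration only.

```python
from collections import Counter

# A tiny, made-up "training set". Real systems learn from vastly more text.
corpus = (
    "a quick brown fox jumps over the lazy dog . "
    "a quick brown fox runs away . "
    "a quick brown car drives past ."
).split()

def next_word_counts(words, context):
    """Count which words follow the given context (a tuple of words)."""
    n = len(context)
    counts = Counter()
    for i in range(len(words) - n):
        if tuple(words[i:i + n]) == context:
            counts[words[i + n]] += 1
    return counts

def predict(words, context):
    """Return (word, probability) pairs, most likely first."""
    counts = next_word_counts(words, context)
    total = sum(counts.values())
    return [(word, count / total) for word, count in counts.most_common()]

predictions = predict(corpus, ("quick", "brown"))
print(predictions)  # "fox" comes first, "car" second
```

In this sample “fox” follows “quick brown” two times out of three and “car” once, so “fox” is the top guess. Ask about a phrase that never appears in the sample and the function returns an empty list, which is more honest than a chatbot tends to be.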

GPUs consume a lot of power and generate a lot of heat, more than regular computer chips. Stack thousands of them together in thousands of server farms, at an industrial scale, and you get a global-scale increase in CO2 emissions (from power generation) and water consumption (for cooling).

In practice, when you first use ChatGPT or one of its cousins, it is fun and easy. It is pleasant and accommodating, flattering and eager. But soon you’ll see a pattern of “fluffing”: irritating, unnecessary, and continuous praise. And because it’s a prediction machine that only approximates reality, it will also make up answers that sound correct but aren’t. It will often fabricate instead of just saying it doesn’t know. It probably doesn’t know the difference. For example, there’s now a database of hallucinated case law.

You can train and direct AI to give “better” answers, more “truthful” answers (kind of important in things like autonomous kill vehicles or nuclear power plant management), but in the end it is merely (but convincingly) a predicting and imitating machine trained on whatever data was available, publicly or otherwise.

This is the source of accusations of intellectual property theft, including using pirated, copyrighted material to train AIs. Do two wrongs make a right? Probably not. Some argue that if AI isn’t allowed to derive its knowledge from previous work then nor should any of the world’s authors, visual artists, and researchers. I don’t agree, but there are bigger issues.

AI is an evolution of human information technologies (speech, writing, movable type, radio & television, the internet…), but it’s also fundamentally different, just as each subsequent information tool was fundamentally different from its predecessor. And with that difference, each brought its own disruptions.

How is AI different? One way is that it doesn’t need a human to make decisions or be creative. It can make its own decisions. It engages in creative problem solving. Newspapers in and of themselves don’t make decisions – their human editors do. Radios and televisions don’t engage in creativity – their human writers and actors do. And as we turn more and more of our decision-making power over to machines in the pursuit of efficiency, advantage, and profit, as we take humans out of the decision-making loop and those decisions become more and more opaque to their human creators, we are creating a wave of more and more disruption.

Some people think AI is similar to previous technologies. It’s just a technology, they argue. A tool that can be used for good or bad. Like fire, or a hammer. It’s not…and not just because it can make its own decisions, or because we don’t understand what it’s doing.

Stay tuned for next week’s journal entry: the good, the bad, and the ugly of AI.

1. My buddy Karl has a different definition: “I lean toward the autistic bookworm who after being exposed to everything ever written is locked in a vault where it has no intuitive knowledge of the outside world and how you relate to it. But this entity makes for one hell of a personal assistant, albeit a flawed one that sometimes needs everything explained to it.”

2. “My Mother the Car” is considered one of the worst television shows of all time, but “Herbie the Lovebug” won awards. The human mind is weird and wonderful, which makes creating an artificial intelligence not straightforward.

3. There’s more to it of course, like machine learning, image recognition, robotics, natural language processing and many other sub-fields and emerging fields of study.
